Abstract

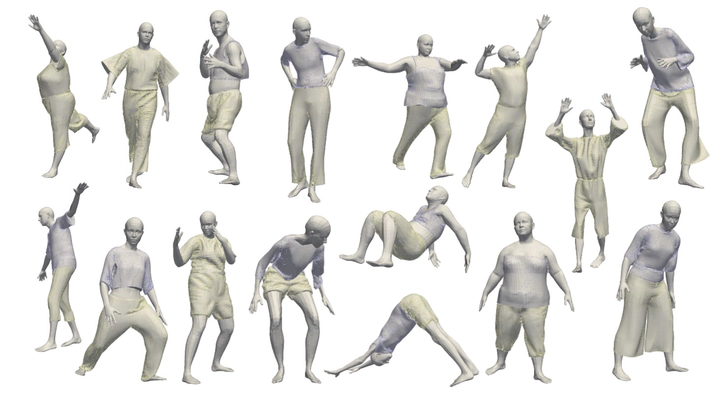

The 3D cloth segmentation task is particularly challenging due to the extreme variation in shape, even among clothes of the same category. Several data-driven methods attempt to address this problem, but they are limited by the lack of available training data that generalizes to the variety of real-world clothing. For this reason, we present GIM3D (Garments In Motion 3D), a synthetic dataset of clothed 3D human characters in different poses. The more than 4000 3D models in this dataset are produced by physically simulating clothes of different fabrics, sizes, and tightness on animated human avatars with a large variety of shapes. Each model is a single mesh created to simulate a 3D scan, with labels for the separate garments and the visible body parts. We also evaluate GIM3D as a training set for garment segmentation using state-of-the-art data-driven methods for both meshes and point clouds.